Governance and responsible AI – Bank Privee

Published on October 21, 2025

Private banks process sensitive data (wealth, tax and health information) and must comply with the GDPR, local rules and the European AI Act. To accelerate innovation without compromising privacy, many private banks are exploring ways to prototype models without using real data and to strengthen model governance.

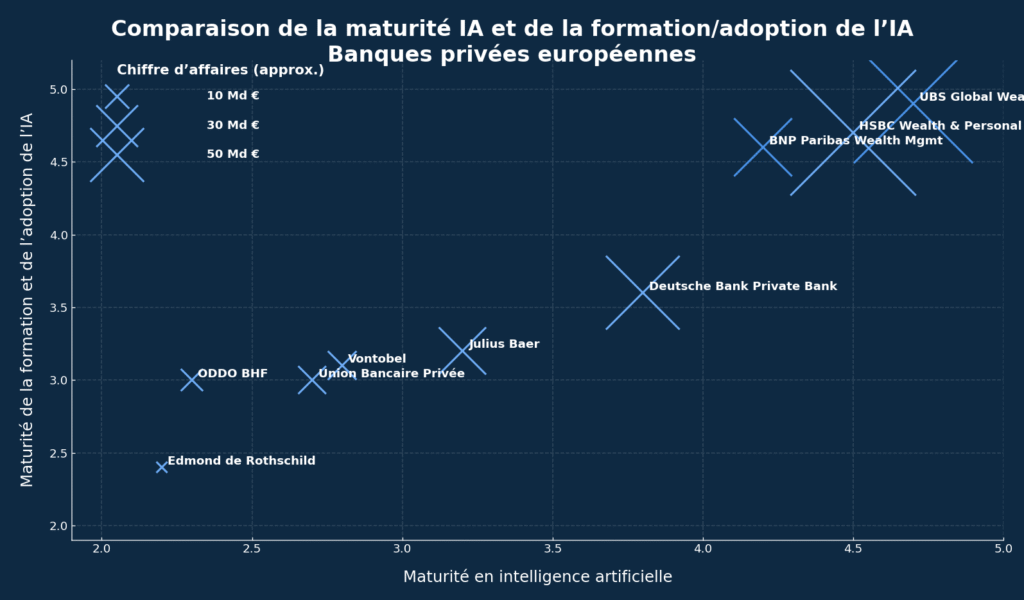

Maturity ranking of European private banks:

*Palmer Research Resources (estimate based on public data):

Horizontal axis (abscissa): Maturity in artificial intelligence.

This is a qualitative score (on a scale of 1 to 5, displayed here from about 2 to 5) that summarizes the technical level: data governance, models in production, AI tools (RAG, NLP, document vision), information system integrations, security/GDPR, etc.

→ The further to the right, the more technically advanced the bank is in AI.

Vertical axis (ordinate): Maturity of AI training and adoption.

Another qualitative score (same type of scale) that measures actual diffusion in teams: training of advisors and support functions, usage rates, change management, processes, compliance checks, etc.

→ The higher you go, the more AI is used and mastered by teams.

In November 2024, analytics software specialist SAS acquired Hazy's synthetic data technology. The aim is to integrate synthetic data generation into the SAS Viya platform, enabling companies to create datasets that faithfully reproduce the original statistical distributions without exposing any identifiable information. SAS CEO Jim Goodnight points out that this best-in-class technology will enable customers to “harness data safely and effectively, enabling them to experiment and model scenarios previously out of reach”. IDC sees synthetic data as a “game changer” for sectors subject to strict regulations, such as healthcare and finance.

Meanwhile, in January 2025, Austrian start-up MOSTLY AI launched an open source SDK for generating synthetic data locally. The tool makes it possible to train differentially private data generators and to produce shareable datasets without divulging sensitive information. The SDK features state-of-the-art models (TabularARGN, LSTM) and built-in fidelity and privacy metrics.
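To make the idea of "preserving statistical distributions without copying records" concrete, here is a deliberately naive sketch in plain Python. It is not the SAS or MOSTLY AI method and does not use their APIs: it fits each column independently (mean and standard deviation for numbers, observed frequencies for categories) and samples new rows from those summaries. The function names `fit_marginals` and `sample_synthetic` are hypothetical. Unlike the tools above, this toy ignores correlations between columns and offers no formal privacy guarantee such as differential privacy.

```python
import random
import statistics
from collections import Counter


def fit_marginals(rows):
    """Summarize each column of the real rows (a list of dicts) independently."""
    model = {}
    for col in rows[0]:
        values = [r[col] for r in rows]
        if all(isinstance(v, (int, float)) for v in values):
            # Numeric column: keep only the mean and the population std deviation.
            model[col] = ("numeric", statistics.mean(values), statistics.pstdev(values))
        else:
            # Categorical column: keep the categories and their observed counts.
            counts = Counter(values)
            model[col] = ("categorical", list(counts.keys()), list(counts.values()))
    return model


def sample_synthetic(model, n, seed=0):
    """Draw n synthetic rows from the per-column summaries."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        row = {}
        for col, (kind, a, b) in model.items():
            if kind == "numeric":
                row[col] = rng.gauss(a, b)  # a = mean, b = std deviation
            else:
                row[col] = rng.choices(a, weights=b, k=1)[0]  # a = categories, b = counts
        rows.append(row)
    return rows


# Illustrative "real" data (invented for this example).
real = [
    {"age": 40, "segment": "A"},
    {"age": 60, "segment": "B"},
    {"age": 50, "segment": "A"},
]
synthetic = sample_synthetic(fit_marginals(real), n=200, seed=1)
```

The synthetic rows share the average age and segment mix of the originals, but none of them is a copy of a real record. Dedicated generators go much further by modeling relationships between columns and measuring privacy risk.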

Data contracts: clearly define who can access what data and for what purposes. AI projects must be based on anonymized or synthetic datasets, with access logs.
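A data contract of this kind can be sketched as a small object that records who may use a dataset, for which purposes, and that logs every request. The class name `DataContract`, the roles, the purposes and the `AI_PURPOSES` set below are illustrative assumptions, not a standard; the only rules encoded are the ones stated above (explicit access rights, AI work on anonymized or synthetic data only, access logs).

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Purposes treated as "AI projects" in this sketch (an assumption).
AI_PURPOSES = {"model_training", "prototyping"}


@dataclass
class DataContract:
    dataset: str
    data_kind: str   # "synthetic", "anonymized" or "real"
    allowed: dict    # role -> set of permitted purposes
    access_log: list = field(default_factory=list)

    def request_access(self, role, purpose):
        """Return True if the request is allowed, and log it either way."""
        granted = purpose in self.allowed.get(role, set())
        # AI projects may only run on anonymized or synthetic datasets.
        if purpose in AI_PURPOSES and self.data_kind == "real":
            granted = False
        self.access_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "role": role,
            "purpose": purpose,
            "granted": granted,
        })
        return granted


contract = DataContract(
    dataset="clients_synthetic",
    data_kind="synthetic",
    allowed={"data_scientist": {"model_training"}},
)
ok = contract.request_access("data_scientist", "model_training")
refused = contract.request_access("advisor", "model_training")
```

Refused requests are logged as well as granted ones, which gives auditors a record of attempted access as well as actual use.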

Synthetic data catalog: for each use case (internal chatbot, recommendation engine, scoring test), produce a synthetic twin of the real dataset. Synthetic data generated with SAS Data Maker or MOSTLY AI can be shared between teams without the risk of violating privacy.

Model cards: document the objective, datasets used (synthetic or real), performance, potential biases and mitigation measures. Alignment tests check that the model does not offer investment advice without all the necessary information.
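As an illustration, a model card can be kept as a structured record rather than free text, which makes it easy to check that no section has been left empty before a model goes to review. The sketch below is one possible layout, assuming the fields listed above; the class `ModelCard`, its method names and the example values are hypothetical.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ModelCard:
    name: str
    objective: str
    datasets: dict = field(default_factory=dict)        # dataset name -> "synthetic" or "real"
    performance: dict = field(default_factory=dict)     # metric name -> value
    potential_biases: list = field(default_factory=list)
    mitigations: list = field(default_factory=list)

    def missing_sections(self):
        """List the sections that are still empty."""
        return [key for key, value in asdict(self).items() if not value]

    def to_json(self):
        """Serialize the card so it can be stored alongside the model."""
        return json.dumps(asdict(self), indent=2)


card = ModelCard(
    name="internal-chatbot-prototype",
    objective="Answer advisor questions about internal procedures",
    datasets={"synthetic_client_notes": "synthetic"},
)
todo = card.missing_sections()
```

Here `todo` lists the sections the team still has to document (performance, potential biases, mitigations), which a review committee could use as a simple gate.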

Monitoring and audits: set up continuous monitoring to detect drift. An internal “red team” can carry out prompt injection attacks or adversarial tests to measure system resilience.
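One common way to quantify drift on a numeric feature or score is the Population Stability Index (PSI), which compares the distribution seen at training time with the one seen in production. The text above does not prescribe a metric, so PSI is offered here only as one option. The sketch below is a minimal pure-Python version; the bin count and the 0.25 alert level are widely quoted rules of thumb, not values from this article.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clip out-of-range values to edge bins
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]   # scores observed at validation time
recent = [v + 0.5 for v in baseline]       # production scores, shifted upwards

drift_score = psi(baseline, recent)
alert = drift_score > 0.25                 # rule-of-thumb threshold (assumption)
```

A scheduled job could compute this score per feature and raise an alert to the model owner when the threshold is crossed.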

Cross-functional AI committee: involving risk management, compliance, legal and business units to validate new AI projects. This committee assesses benefits and risks, approves the use of synthetic data and sets rules for experimentation.

Prototype a co-pilot: generate a synthetic set of customer documents and internal notes in order to develop a RAG system without exposing personal data. Tests on the synthetic data are used to adjust the architecture and validate the relevance of responses before deployment.

KYC/AML algorithm testing: create fictitious scenarios, including complex structures and on-chain movements, to train models to recognize fraud patterns without using real customer accounts.
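A minimal sketch of such a scenario generator is shown below. It covers only one simple pattern, "structuring" (several deposits just under a reporting threshold), and leaves out the complex ownership structures and on-chain movements mentioned above. The function name `make_scenarios`, the 10,000 threshold, the amounts and the fraud rate are all illustrative assumptions.

```python
import random


def make_scenarios(n_accounts=50, fraud_rate=0.2, threshold=10_000, seed=42):
    """Generate labeled, entirely fictitious transactions for model testing."""
    rng = random.Random(seed)
    transactions = []
    for i in range(n_accounts):
        account = f"SYN-{i:04d}"  # synthetic identifier, never a real account number
        suspicious = rng.random() < fraud_rate
        if suspicious:
            # Structuring: a series of deposits just below the reporting threshold.
            amounts = [round(rng.uniform(0.90, 0.99) * threshold, 2)
                       for _ in range(rng.randint(4, 8))]
        else:
            # Ordinary activity: a few smaller, unrelated amounts.
            amounts = [round(rng.uniform(50, 3_000), 2)
                       for _ in range(rng.randint(1, 6))]
        for amount in amounts:
            transactions.append(
                {"account": account, "amount": amount, "label": int(suspicious)}
            )
    return transactions


dataset = make_scenarios(seed=1)
```

Because the generator is seeded, the same scenarios can be replayed when comparing two versions of a detection model.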

Simulation of customer behavior: in the context of personalization (article 3), synthetic data is used to simulate the impact of a recommendation (investing in an AI fund, taking out life insurance) and to estimate the customer’s reaction without using real data.

Technology and banking companies are accelerating the adoption of synthetic data. SAS predicts that by 2026, 75% of companies will be using generative AI to produce synthetic customer data, compared with less than 5% in 2023. The release of the MOSTLY AI SDK shows that the open source community is getting to grips with the issue. In the private banking sector, players such as BNP Paribas and UBS are equipping themselves with sandboxes where models are trained on synthetic data to avoid any risk of leakage and to comply with the GDPR.

The implementation of robust data governance and the adoption of synthetic data represent a major step forward for private banks. The acquisition of Hazy by SAS and the open-sourcing of the MOSTLY AI SDK show that the industry is getting organized to provide tools that respect confidentiality. By combining these approaches with a rigorous governance framework (data contracts, model cards, AI committee), private banks can accelerate innovation while protecting their customers and complying with regulations.