Transformers vs. older NLP models

Transformers vs. legacy language processing models

Published on September 24, 2025

Central mechanism: self-attention

→ The model “looks” at all the words in parallel and learns which relationships are important, even over long distances.

Massive parallelization: no strictly sequential processing as in Recurrent Neural Networks (RNNs) → much faster training on Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs).

Long context: handles large context windows (thousands of tokens), where RNNs and their variants lose track of distant information.

Scalability: scales very well (parameters, data, compute) → hence modern Large Language Models (LLMs).

Flexibility: extends to multimodal inputs (text, image, audio), in-context learning, and efficient fine-tuning.
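To make the self-attention idea above concrete, here is a minimal sketch in plain Python. It is a simplification, not a production layer: it assumes a single head and uses the raw input vectors as queries, keys and values (a real Transformer learns separate projection matrices). The function names `softmax` and `self_attention` and the toy `tokens` are illustrative choices.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Single-head scaled dot-product self-attention.

    Assumption for brevity: queries, keys and values are the raw input
    vectors (identity projections). A real layer learns W_q, W_k, W_v.
    """
    d = len(x[0])
    # Score every token against every other token: an n x n matrix.
    scores = [[sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
               for k in x] for q in x]
    # Turn each row of scores into attention weights that sum to 1.
    weights = [softmax(row) for row in scores]
    # Each output vector is a weighted average of all input vectors.
    out = [[sum(w * v[j] for w, v in zip(w_row, x)) for j in range(d)]
           for w_row in weights]
    return weights, out

# Toy 2-dimensional "embeddings": tokens 0 and 2 are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
weights, out = self_attention(tokens)
```

In this toy example, token 0 attends to token 2 exactly as strongly as to itself, because the score depends only on content and not on how far apart the positions are. Every row of `scores` can also be computed independently of the others, which is what makes the operation easy to parallelize.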

Limitations that still apply to Transformers

Quadratic cost with context length (“classic” attention) → high memory and computation.

High data and computation requirements for very large models.

Less local inductive bias than Convolutional Neural Networks (CNN), which naturally capture local patterns.
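The quadratic cost mentioned above can be illustrated with a little arithmetic. The sketch below assumes the full attention matrix is stored as 32-bit floats for one head in one layer; optimized implementations may avoid materializing the whole matrix, so treat the numbers as an order of magnitude only. The helper name `attention_matrix_bytes` is hypothetical.

```python
def attention_matrix_bytes(n_tokens, bytes_per_value=4):
    """Memory for one n x n attention matrix (one head, one layer).

    Assumption: the full matrix is materialized in float32 (4 bytes).
    """
    return n_tokens * n_tokens * bytes_per_value

for n in (1024, 2048, 8192):
    mib = attention_matrix_bytes(n) / 2**20
    print(f"{n} tokens: {mib:.0f} MiB per head per layer")
```

Doubling the context length quadruples the size of the matrix, which is why long contexts quickly become expensive with “classic” attention.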

RNN – Recurrent Neural Networks: word-by-word processing; difficulty with long-term memory (vanishing/exploding gradients).

LSTM – Long Short-Term Memory: adds gates for better memory → for a long time the state of the art in translation and speech.

GRU – Gated Recurrent Unit: a lighter variant of the LSTM.

Seq2Seq – Sequence-to-Sequence with attention (Bahdanau/Luong): the first big leap in translation; attention is a module, not the entire architecture.

CNN/ConvS2S – Convolutional Neural Networks / Convolutional Sequence-to-Sequence: parallelizable over local windows, good on local patterns, less suited to very long dependencies.

(WaveNet for audio: generative convolutional architecture).
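The vanishing/exploding gradient problem noted for RNNs above can be seen in a deliberately simplified setting. The sketch below assumes a scalar, linear recurrence `h_t = w * h_{t-1} + x_t`, with no nonlinearity and no gates, so it is an illustration of the mechanism rather than a real RNN. The function name `gradient_through_time` is hypothetical.

```python
def gradient_through_time(w, steps):
    """Gradient of h_T with respect to h_0 for h_t = w * h_{t-1} + x_t.

    Each time step multiplies the gradient by w (chain rule), so the
    result equals w ** steps.
    """
    grad = 1.0
    for _ in range(steps):
        grad *= w  # one multiplicative factor per time step
    return grad

for w in (0.9, 1.1):
    print(w, gradient_through_time(w, 100))
```

With a recurrent weight slightly below 1 the gradient shrinks toward zero after 100 steps, and slightly above 1 it blows up. Gated cells such as LSTM and GRU were designed to soften this effect by letting the network control how much of the state is carried forward.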

n-gram models (count-based language models),

HMM – Hidden Markov Models for sequence labeling,

CRF – Conditional Random Fields for structured labeling,

PCFG – Probabilistic Context-Free Grammars.

→ Little semantic understanding, heavy feature engineering, limited performance.
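As an illustration of what “counting” means for the n-gram models listed above, here is a minimal bigram sketch using only the standard library. It assumes whitespace tokenization and no smoothing; the names `train_bigram`, `bigram_prob` and the toy `corpus` are illustrative.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def bigram_prob(counts, prev, nxt):
    """Maximum-likelihood estimate of P(nxt | prev), without smoothing."""
    total = sum(counts[prev].values())
    return counts[prev][nxt] / total if total else 0.0

corpus = "the cat sat on the mat the cat ran".split()
model = train_bigram(corpus)
p_seen = bigram_prob(model, "the", "cat")    # "the" is followed by "cat" 2 times out of 3
p_unseen = bigram_prob(model, "the", "dog")  # never observed in the corpus
```

Any word pair that never appears in the training text receives probability zero here, and the model only ever looks one word back. This is one reason such models need careful smoothing and feature engineering and generalize poorly compared with neural approaches.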

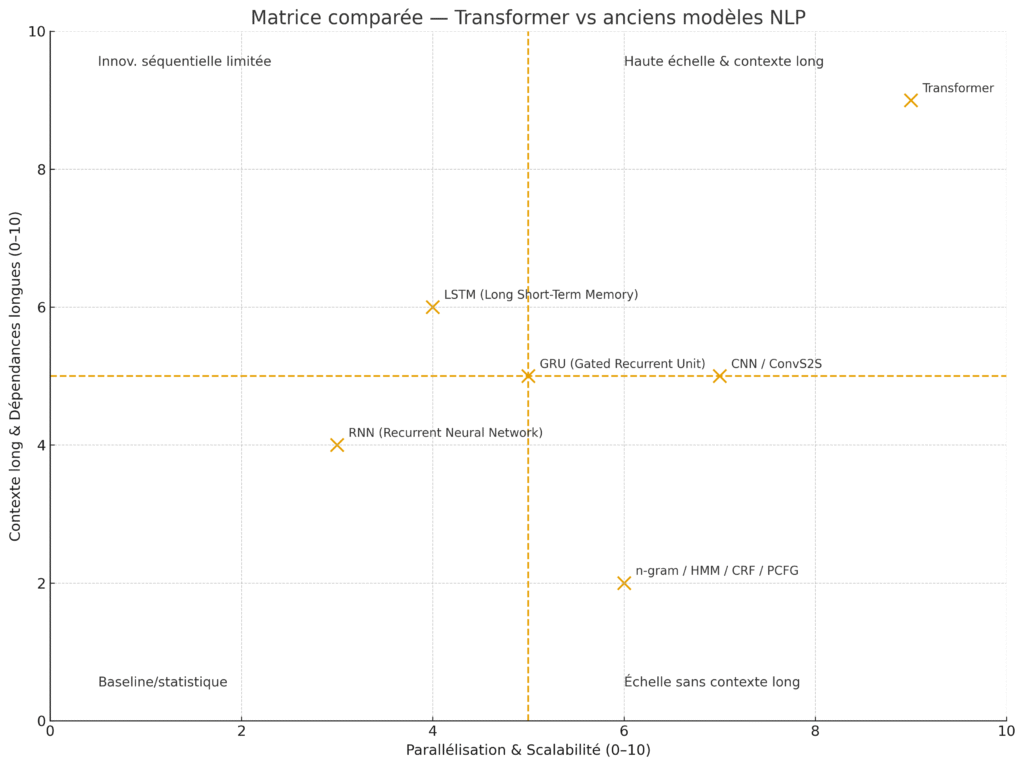

| Dimension | Transformer | RNN/LSTM/GRU | CNN/ConvS2S | n-gram/HMM/CRF/PCFG |
|---|---|---|---|---|
| Processing | Parallel (self-attention) | Sequential (recurrent hidden state) | Local parallel (filters) | Counting/statistics |
| Long-range dependencies | Excellent | Difficult (gradients) | Medium | Weak |
| Training speed | High (GPU/TPU-friendly) | Slower | High | High |
| Long context | Large window (↑ tokens) | Limited | Limited-medium | Very limited |
| LLM scalability | Very good | Limited | Average | N/A |
| Data requirements | High | Lower | Low | Low |
| Memory/compute cost | High (attention) | Moderate | Moderate | Low |
| Local inductive bias | Weaker | – | Stronger | – |

Tight resource constraints (embedded/edge, small datasets) → GRU/LSTM remain relevant.

Dominant local patterns (short sequences, regular patterns) → CNN/ConvS2S is efficient, simple and fast.

Legacy labeling pipelines (little data, need for interpretability) → CRF/HMM still useful.